Hellas: Trustless Tensor Compute Through Economic Guarantees

Key Takeaways

- Deterministic execution makes any incorrect computation provable through succinct fraud proofs

- Collateral-backed commitments make honest execution the rational strategy

- Most jobs settle off-chain, secured by a credible threat of verification

- As adoption grows, deeper capital and cheaper verification strengthen security

TL;DR: Tensor compute underpins modern AI. Hellas makes it trustless, unlocking a liquid market for intelligence. Clients request jobs; providers execute them off-chain and post collateral as a commitment to correct execution. Deterministic execution makes incorrect computation provable and enforceable on-chain. Because cheating carries negative expected value, honest execution becomes the rational strategy.

The Missing Piece in AI Compute Markets

Tensor compute, the high-performance execution of tensor operations, powers modern machine learning systems, from model inference to training. As demand for AI workloads grows, this compute is increasingly outsourced. Today, there is no shortage of ways to access GPUs. Centralized AI clouds such as CoreWeave, Lambda, AWS, Google Cloud, and Microsoft Azure offer powerful infrastructure and predictable operations.

In parallel, decentralized and marketplace-style networks such as Akash Network and io.net aggregate GPU supply from many independent operators, often at competitive prices, while aggregators such as OpenRouter route requests across providers to optimize for price and availability.

Networks such as Bittensor, Gensyn, and Prime Intellect coordinate distributed AI contributors through various mechanisms, including staking, peer evaluation, and collaborative training frameworks.

However, what remains structurally challenging in open compute markets is verifying the correctness of a specific computation. If correctness cannot be proven, the system inevitably falls back on trust.

Indeed, when tensor compute runs off-chain, the client typically cannot tell whether the full computation was executed, whether shortcuts were taken, or whether results were subtly degraded. While TEE-based approaches (e.g., EigenAI and Cocoon) can provide hardware-level attestation of execution, they rely on expensive, datacenter-grade GPUs and ultimately anchor security in the silicon manufacturer, limiting openness and scalability. Without verifiable guarantees, even decentralized markets revert to trust, reputation, or long-term relationships: mechanisms that do not scale cleanly to open, permissionless environments.

Hellas is designed to address this missing layer: replacing implicit trust with verifiable guarantees for off-chain tensor compute, enabling efficient and liquid markets for AI computation.

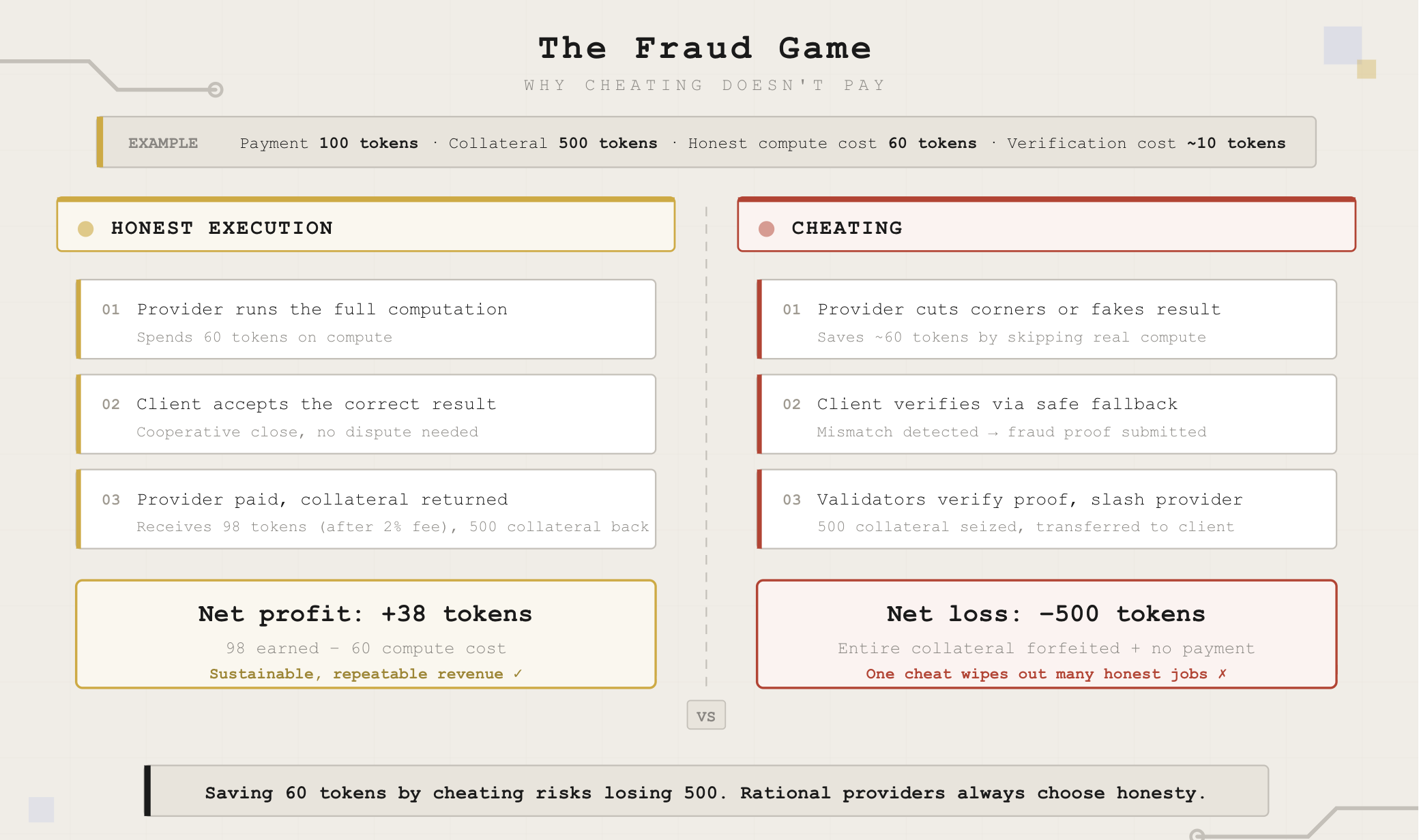

To achieve this, Hellas combines deterministic execution with fraud proofs. Incorrect execution of a specific job can be provably demonstrated and penalized on-chain, making individual computation results economically enforceable. The goal is not just to encourage honesty statistically, but to make cheating on any given job carry negative expected value.

Hellas and the Credible Threat of Verification

Hellas is a fully decentralized network for verifiable off-chain tensor compute. It connects clients who need computation with providers who supply it, and replaces trust with economic guarantees.

Central to Hellas is Catgrad, its core technological innovation. Catgrad is a categorical deep learning compiler that makes tensor compute deterministic across environments.

Determinism unlocks a powerful asymmetry. To prove correctness, one would need to verify the entire computation, which is prohibitively expensive for large model executions. To prove incorrectness, however, it is sufficient to demonstrate that a single sub-operation produced the wrong result. Catgrad enables exactly this: any faulty step can be pinpointed and turned into a succinct fraud proof.
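
To make the asymmetry concrete, here is a minimal sketch of how a single faulty step could be located once two runs disagree. It is not Catgrad's actual mechanism, which this article does not specify. It assumes each run publishes a hash chain over its intermediate step outputs, so that once the runs diverge, every later commitment differs too. The names `chain_commitments` and `first_divergent_step` are hypothetical.

```python
import hashlib


def chain_commitments(step_outputs):
    """Hash-chain step outputs so commitment i depends on every step up to i.

    Assumption: each step output is available as bytes. A real system would
    serialize tensors in some canonical form first.
    """
    commitments = []
    running = b""
    for out in step_outputs:
        running = hashlib.sha256(running + out).digest()
        commitments.append(running)
    return commitments


def first_divergent_step(claimed, reference):
    """Binary-search for the first index where two commitment chains differ.

    Returns None when the chains agree. Because the chains are cumulative,
    agreement at index i implies agreement at every earlier index, which is
    what makes bisection valid here.
    """
    if len(claimed) != len(reference):
        raise ValueError("traces must have the same number of steps")
    if not claimed or claimed[-1] == reference[-1]:
        return None
    lo, hi = 0, len(claimed) - 1  # invariant: the chains differ at index hi
    while lo < hi:
        mid = (lo + hi) // 2
        if claimed[mid] == reference[mid]:
            lo = mid + 1  # still in agreement, the fault is later
        else:
            hi = mid      # already diverged, the fault is here or earlier
    return lo
```

Locating the fault takes a logarithmic number of comparisons in the trace length, and only the one step at the returned index would then need to be re-executed to show that its output is wrong.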

Hellas builds on this primitive. Because any job can be verified, not every job needs to be verified. Computation happens off-chain, and only succinct commitments to the agreed computation and its outcome are recorded on-chain. The guarantee that cheating can be proven and penalized is sufficient to deter it.
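
As an illustration of what a succinct commitment might look like, the sketch below hashes a job description and a result into fixed-size digests. The choice of SHA-256 over canonical JSON and the names `commit_job` and `commit_result` are assumptions for this example; the article does not describe the commitment scheme Hellas uses.

```python
import hashlib
import json


def commit_job(job_spec: dict) -> str:
    """Return a hex digest of a canonical JSON encoding of the job description.

    Sorting keys and fixing separators makes the digest independent of the
    order in which the fields were written.
    """
    canonical = json.dumps(job_spec, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def commit_result(result_bytes: bytes) -> str:
    """Return a hex digest of the serialized result."""
    return hashlib.sha256(result_bytes).hexdigest()
```

Under this sketch, only the two 32-byte digests would need to be recorded, regardless of how large the model or its output is.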

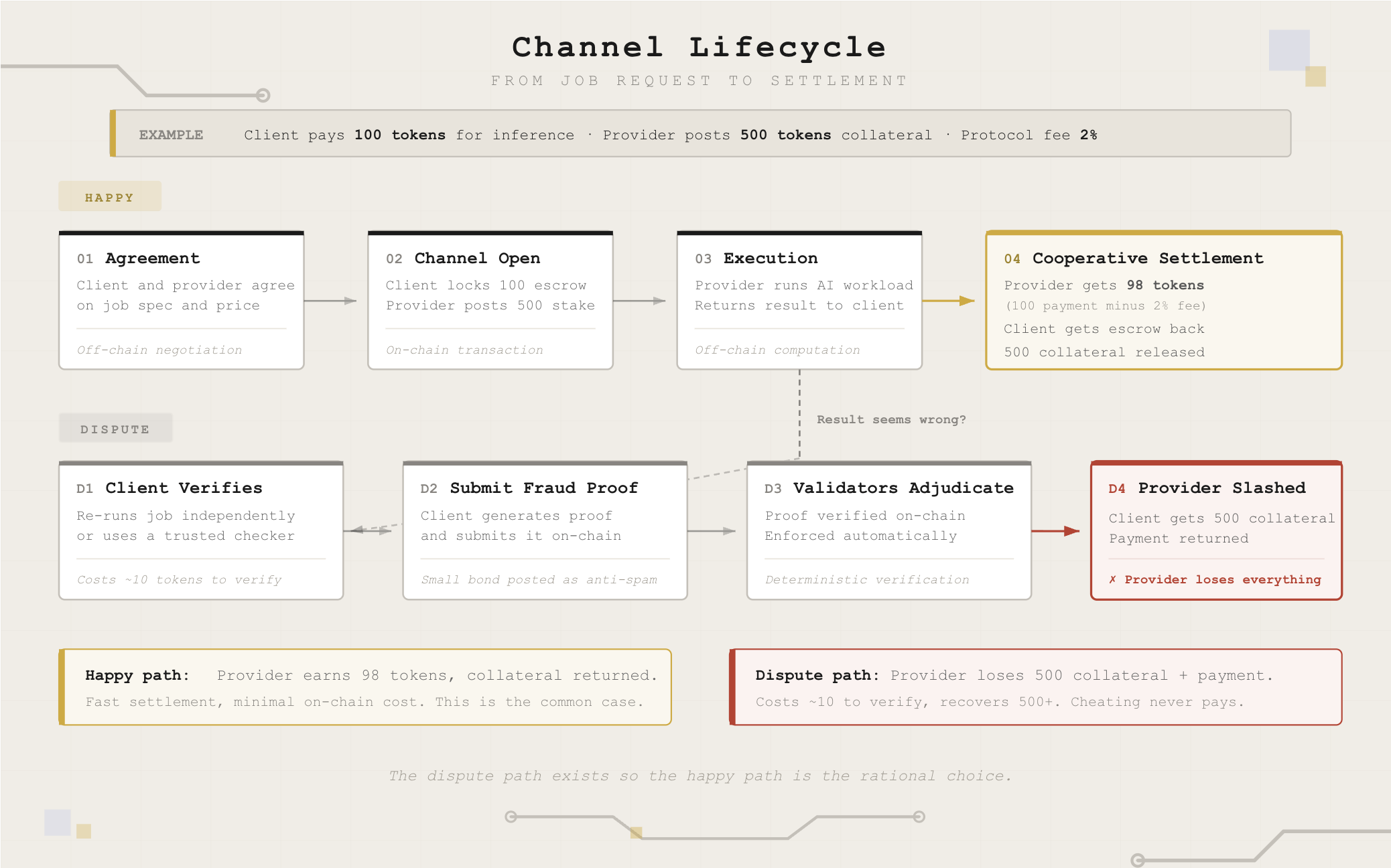

In practice, when a provider accepts a job, they must post collateral. If they return a correct result, they get paid and recover their collateral. If they return an incorrect result, the client has the means to prove it and claim the collateral.
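
The deterrence argument can be sketched as a back-of-the-envelope calculation. The payoff model below is an assumption made for illustration, not the formal model from the analysis: a cheater who goes undetected collects the full payment, while a cheater who is caught forfeits both the payment and the collateral. All function and parameter names are hypothetical.

```python
def cheating_expected_value(payment, collateral, detect_prob, cheat_cost=0.0):
    """Expected payoff of returning an incorrect result.

    With probability (1 - detect_prob) the provider keeps the payment;
    with probability detect_prob the collateral is lost instead.
    """
    if not 0.0 <= detect_prob <= 1.0:
        raise ValueError("detect_prob must lie in [0, 1]")
    return (1.0 - detect_prob) * payment - detect_prob * collateral - cheat_cost


def min_collateral_for_deterrence(payment, detect_prob, cheat_cost=0.0):
    """Collateral at which cheating breaks even; anything above it makes
    the expected value of cheating negative."""
    if not 0.0 < detect_prob <= 1.0:
        raise ValueError("detect_prob must lie in (0, 1]")
    return max(0.0, ((1.0 - detect_prob) * payment - cheat_cost) / detect_prob)


def min_audit_rate(payment, collateral):
    """Detection probability at which cheating breaks even, assuming
    cheating itself costs the provider nothing."""
    if payment < 0 or collateral < 0 or payment + collateral == 0:
        raise ValueError("payment and collateral must be non-negative, not both zero")
    return payment / (payment + collateral)
```

For example, with a payment of 100 and a 25% chance of detection, the break-even collateral in this model is 300. Read the other way, a provider who posts 300 against a payment of 100 is deterred as soon as more than one job in four would be checked.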

Thus, Catgrad makes incorrect execution provable. Hellas enforces penalties severe enough to deter cheating. Together, they create a credible threat of verification that secures off-chain execution.

At CryptoEconLab, we conducted a game-theoretic analysis of this mechanism. Here is what we found.

How Hellas Works

Hellas has three roles:

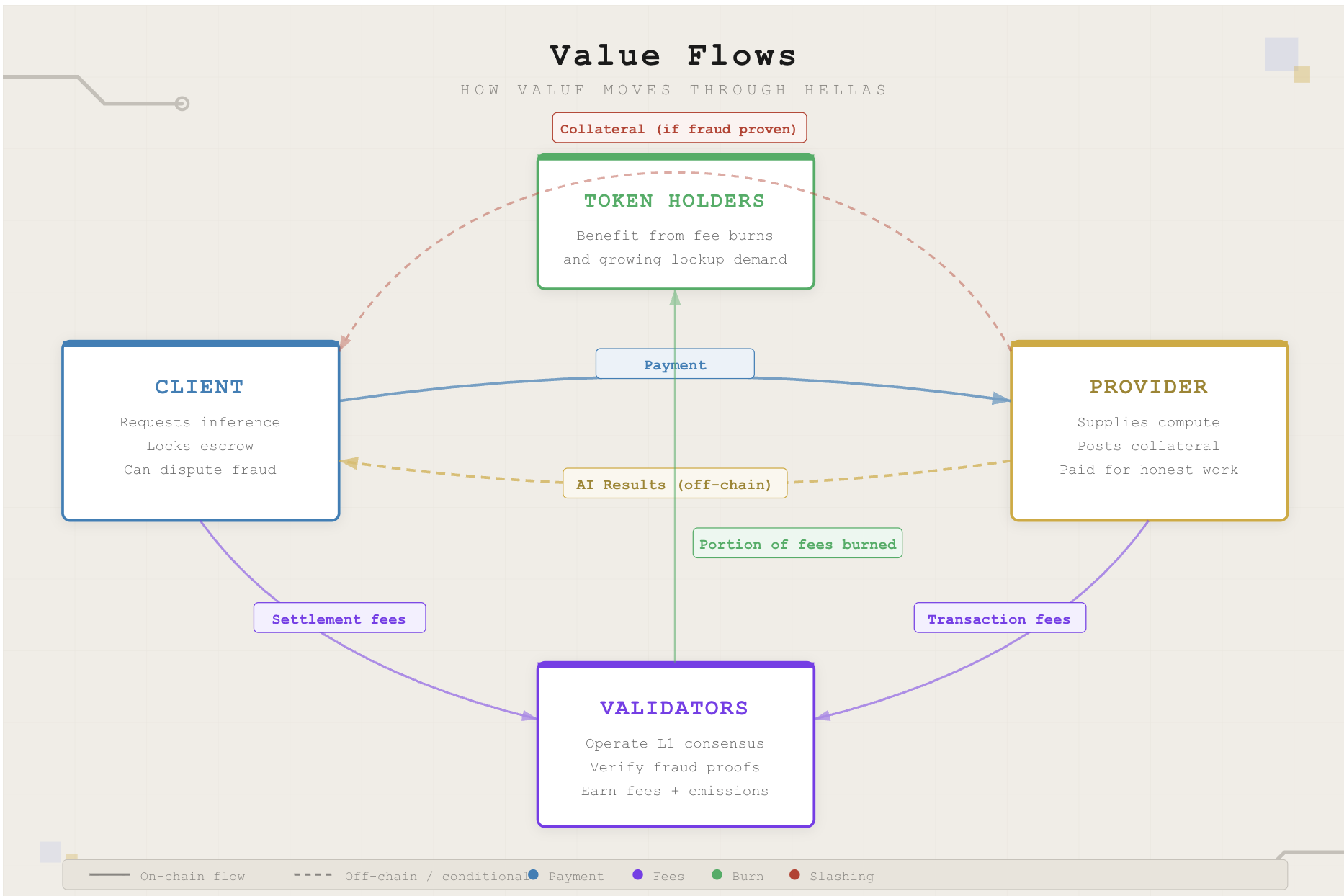

Clients request jobs, such as AI inference or training. They lock escrow to open a channel, receive results off-chain, and pay on settlement. If something goes wrong, the provider's collateral guarantees compensation.

Providers supply compute. They post collateral for each job, execute off-chain, and earn payment when they deliver correctly. Honest work is profitable. Cheating risks losing everything.

Validators operate the Hellas L1. They finalize channel openings and closings, verify fraud proofs, and execute slashing when proofs are valid. Their revenue comes from protocol emissions and fees on settlements.

In normal operation, this interaction is straightforward. A client opens a channel with a provider, paying a small fee to validators. Computation runs off-chain. The result is returned. Both parties sign off, the channel settles, the provider is paid, and collateral is released.

If something seems wrong, the client generates a fraud proof demonstrating incorrect execution and submits it on-chain. If the proof is valid, the original provider's collateral is slashed and transferred to the client, covering verification costs and more. This is the Fraud Game: the game-theoretic mechanism where the credible threat of verification renders cheating unprofitable.
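
The two paths described above, cooperative settlement and a successful dispute, can be sketched as a small state machine. This is a toy model under stated assumptions, not the protocol's implementation: protocol fees are omitted, a rejected fraud proof simply leaves the channel open, and on a valid proof the client's escrow is assumed to be returned along with the slashed collateral. The `Channel` class and its fields are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Channel:
    escrow: float      # locked by the client when the channel opens
    collateral: float  # posted by the provider for the job
    price: float       # agreed payment for a correct result
    state: str = "open"
    balances: dict = field(
        default_factory=lambda: {"client": 0.0, "provider": 0.0}
    )

    def __post_init__(self):
        if self.price > self.escrow:
            raise ValueError("escrow must cover the agreed price")

    def _require_open(self):
        if self.state != "open":
            raise RuntimeError(f"channel is already {self.state}")

    def settle(self):
        """Both parties sign off: pay the provider and release collateral."""
        self._require_open()
        self.balances["provider"] += self.price + self.collateral
        self.balances["client"] += self.escrow - self.price
        self.state = "settled"

    def dispute(self, proof_is_valid: bool) -> bool:
        """Client submits a fraud proof; slash the provider only if it is valid."""
        self._require_open()
        if not proof_is_valid:
            return False  # assumption: the channel stays open
        self.balances["client"] += self.escrow + self.collateral
        self.state = "slashed"
        return True
```

In this model a channel ends in exactly one of two terminal states, and a provider can only lose collateral through a proof that validators accept.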

Catgrad supplies the proof mechanism: because execution is deterministic, any discrepancy between two runs can be traced and proven on-chain. How fraud is detected in the first place is left to the client, who has several options: re-running the job themselves, requesting the same job from an independent provider, using domain-specific heuristics, or relying on an insurance service that specializes in catching fraud. As the network matures, this freedom creates a market for verification, and since catching fraud is profitable, specialized verification services may emerge. Actors who develop efficient detection methods can profitably serve clients, driving verification costs down over time and strengthening the system.

The result is a system where providers are deterred from cheating, verification improves with scale, and most jobs settle without dispute.

At CryptoEconLab, we formalized the incentive structure underlying this mechanism and built a simulation engine to stress test its behavior across a wide range of parameters and conditions. The analysis confirms that, when properly calibrated, disputes remain worth pursuing and cheating carries negative expected value.

How Value Flows Through the System

When a channel is opened, the client pays a small protocol fee, and an additional fee is paid when the channel is closed, either after successful execution or after a dispute is resolved. These fees fund validators and are partially burned.
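
A minimal sketch of this fee flow follows. The article does not state fee amounts or the burn share, so `open_fee`, `close_fee`, and `burn_fraction` are hypothetical parameters, and applying the same burn share to both fees is an assumption.

```python
def split_fee(fee, burn_fraction):
    """Split one protocol fee into (validator_share, burned_share)."""
    if fee < 0 or not 0.0 <= burn_fraction <= 1.0:
        raise ValueError("fee must be >= 0 and burn_fraction in [0, 1]")
    burned = fee * burn_fraction
    return fee - burned, burned


def channel_fees(open_fee, close_fee, burn_fraction):
    """Total validator revenue and burn over one channel's lifetime."""
    open_validators, open_burned = split_fee(open_fee, burn_fraction)
    close_validators, close_burned = split_fee(close_fee, burn_fraction)
    return {
        "validators": open_validators + close_validators,
        "burned": open_burned + close_burned,
    }
```

With an open fee of 2, a close fee of 2, and a 25% burn share, validators would receive 3 and 1 would be burned per channel.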

On a successful job, the provider is paid for compute and recovers their collateral. On a successful dispute, the provider's collateral compensates the client and the protocol enforces the outcome mechanically.

As usage grows, providers lock more collateral, clients lock more escrow, and fees scale with real economic activity. Token demand is driven by working capital requirements rather than speculation. Burned fees reduce circulating supply over time, mechanically linking token value to real network usage.

Thus, as the network grows, more jobs generate more fees, supporting validator security and accruing value in the token. More activity leads to more locked capital, increasing the economic weight of the system. At the same time, verification becomes cheaper as specialized checking services emerge.

Security improves with scale because incentives become stronger, not weaker.

Our analysis confirms these dynamics hold under a range of market conditions. The protocol parameters can be calibrated to ensure disputes are always worth pursuing and cheating never pays.

The Bottom Line

Clients get compute they can rely on without trusting the provider. Providers profit from honest work. Validators earn fees that grow with real usage.

Hellas enables a trust-minimized market for tensor compute. Off-chain execution is secured by on-chain guarantees, and economic incentives replace constant verification or centralized trust.

Want to dive deeper? Check out our research papers for the full technical analysis, or try the Parameter Calculator to see how the security model works under different assumptions.
